Shaping the Future of Responsible AI

AI governance, ethics, and compliance solutions for businesses, governments, and innovators.

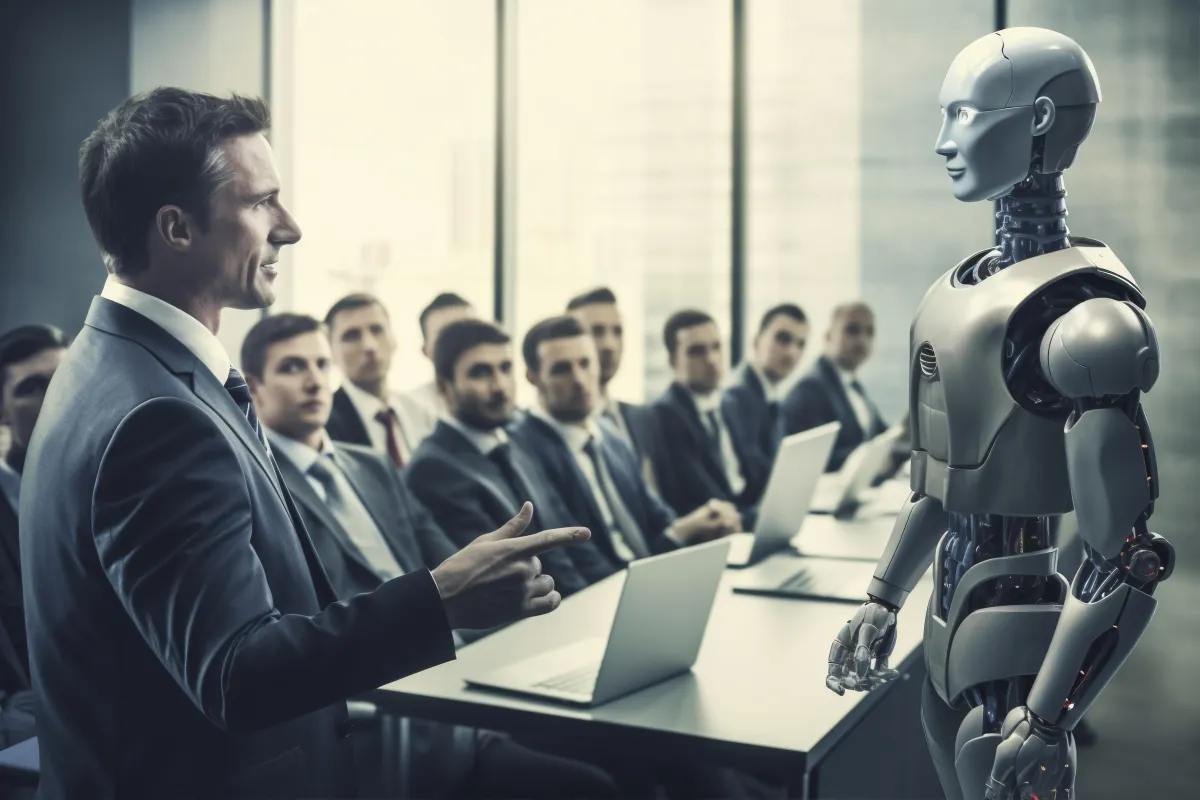

AI is powerful, but without governance it’s risky

As AI becomes part of decision-making, businesses and governments face increasing regulatory scrutiny. Ethical AI is no longer optional; it’s essential for compliance, trust, and innovation.

☞ AI Regulations Are Here – Stay compliant with global AI laws and frameworks (EU AI Act, US Blueprint for an AI Bill of Rights).

☞ Bias & Transparency Issues – Ensure fair, responsible, and accountable AI development.

☞ Future-Proof Your AI – Build AI systems that are ethical, compliant, and scalable.

Master the Legal Future of AI

Three expert-designed courses to help you master AI ethics, legal challenges, and policy innovations at your own pace.

AI & Law Online Course

$27

Responsible AI Online Course

$27

Who Owns Intelligence Online Course

$27

WHAT WE OFFER

Comprehensive AI Governance Solutions

AI Ethics & Governance Consulting

AI Risk Assessment & Bias Audits

Regulatory Compliance & AI Law Advisory

AI Governance Strategy Development

Government & Public Policy Advisory

Facing Legal Questions?

Let’s Find Clear Answers Together

Whether you're facing a legal challenge or planning ahead, our experts are here to help. Get personalized advice tailored to your needs: no pressure, just clarity.

Book a Free Consultation Today!

Let’s turn your questions into confident decisions.

Stay Ahead of AI Regulation Changes

Live Regulation Updates

EU AI Act Updates

New compliance deadlines for AI developers.

March 2025

US AI Bill of Rights

White House releases new AI accountability guidelines.

Feb 2025

China’s AI Regulation

Stricter guidelines for deepfake technology.

Jan 2025

Become a Certified AI Ethics Professional

Develop expertise in AI governance, compliance, and responsible AI practices. Our certification programs are designed for professionals, compliance officers, and businesses looking to lead in ethical AI.

AI Ethics in Action: Lessons from Real-World Cases

Ensuring Accuracy and Reliability in AI-Powered Legal Work

Accuracy and Reliability of AI in Legal Practice: Why Verification Matters More Than Ever

Introduction

Artificial Intelligence (AI) has rapidly become an indispensable ally for lawyers — streamlining research, automating documentation, and improving efficiency. Yet, as courts worldwide are discovering, even the most sophisticated AI tools can make confident but completely false claims. The result? Professional embarrassment, ethical violations, and even legal sanctions.

This post explores real-world cases where AI errors caused serious consequences, why these hallucinations happen, and how legal professionals can adopt governance practices to ensure accuracy and reliability in the age of intelligent automation.

1. When AI Hallucinations Cost Lawyers

In recent years, multiple legal professionals across jurisdictions have faced sanctions for submitting documents containing fabricated case citations generated by AI tools like Claude, Microsoft Copilot, and ChatGPT.

Australia: In Western Australia, a lawyer relied on AI-generated case law for an immigration case, only to discover that four cited cases didn’t exist. The court ordered the lawyer to pay $8,371.30 in costs and referred the matter to the legal regulator (The Guardian, 2025).

United Kingdom: The High Court of England and Wales reported multiple cases with fake citations — 18 in one claim and five in another. Judges warned that misuse of AI could lead to contempt proceedings and erode public trust in the legal system (The Guardian, 2025).

United States: From Morgan & Morgan’s disciplinary action in a Walmart case to a federal judge withdrawing a ruling after discovering AI-generated citation errors, the problem has gone global. Even Anthropic’s Claude AI produced an inaccurate reference in a copyright case, proving that no platform is immune to hallucination.

These examples highlight that AI is not a substitute for professional reasoning — and unverified reliance can have severe consequences.

2. How Often Do Legal AI Tools Get It Wrong?

Despite major advancements, studies continue to reveal alarming error rates:

Research by Chen et al. (2024) found that Lexis+ AI and Westlaw’s AI-assisted tools hallucinate 17–33% of the time, even when using Retrieval-Augmented Generation (RAG).

Another study by Zhong et al. (2024) reported that ChatGPT-4 hallucinated in 58% of legal queries, while Llama 2 reached a staggering 88% error rate when answering verifiable questions about federal court cases.

These findings underscore that even well-engineered AI systems remain prone to producing plausible but false outputs — a phenomenon that can have serious consequences in legal contexts where precision is paramount.

3. Why AI Misleads — and Why We Still Trust It

AI hallucination occurs when a model “confabulates” — producing outputs that appear factual but are actually fabricated.

Psychologically, this links to what researchers call the “AI trust paradox.” As AI language becomes more fluent and human-like, users tend to over-trust its answers — even when they’re wrong.

In the legal profession, where authority and credibility are crucial, this misplaced trust can lead to overreliance and negligence. Lawyers may unconsciously assume that a well-written output equals an accurate one, blurring the line between assistance and authorship.

4. Ethical and Professional Responsibilities

Not verifying AI outputs isn’t just careless — it can breach ethical duties.

The American Bar Association (ABA) issued Formal Opinion 512 in 2024, reaffirming that attorneys remain fully responsible for any AI-generated material they submit. Courts in the U.S., UK, and Australia have echoed this position, emphasizing that AI tools cannot bear accountability; only humans can.

Neglecting verification may constitute professional misconduct, risking disciplinary action or reputational damage. AI must therefore be treated as an assistant, not an authority.

5. Building Reliable Legal AI: Frameworks for the Future

The path forward lies in combining human expertise with transparent, auditable AI design. Emerging frameworks integrate:

Expert systems for rule-based validation.

Knowledge graphs to cross-check citations and legal sources.

Retrieval-Augmented Generation (RAG) to ground answers in verified databases.

Reinforcement Learning from Human Feedback (RLHF) to reduce bias and improve contextual accuracy.

In parallel, law firms must introduce AI governance policies, including:

Staff training and awareness programs.

Documented verification procedures.

Data Protection Impact Assessments (DPIAs).

Oversight committees for ethics and compliance.

These measures form the foundation for trustworthy AI use across the legal ecosystem.
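As a rough illustration of what a documented verification procedure might look like in practice, the sketch below pulls reporter-style citations out of a draft and flags any that are missing from a trusted list. Everything in it is an assumption made for illustration: the `CITATION_RE` pattern covers only a few U.S. reporter formats, `TRUSTED_CITATIONS` stands in for a real verified legal database, and the function names are hypothetical. The second citation in the sample draft is a made-up placeholder.

```python
import re

# Hypothetical pattern: matches only a few U.S. reporter formats
# (e.g. "347 U.S. 483", "999 F.3d 1234", "12 F. Supp. 2d 345").
# Real citation formats vary far more widely than this.
CITATION_RE = re.compile(
    r"\b\d{1,4}\s+(?:U\.S\.|F\.(?:2d|3d|4th)|F\. Supp\.(?: [23]d)?)\s+\d{1,5}\b"
)

# Stand-in for a verified source such as a licensed case-law database.
TRUSTED_CITATIONS = {
    "347 U.S. 483",  # Brown v. Board of Education (1954)
}


def extract_citations(text: str) -> list[str]:
    """Return every citation-like string in the text, whitespace-normalized."""
    return [" ".join(m.group(0).split()) for m in CITATION_RE.finditer(text)]


def flag_unverified(text: str, trusted: set[str]) -> list[str]:
    """Return citations found in the text that are absent from the trusted set."""
    return [c for c in extract_citations(text) if c not in trusted]


if __name__ == "__main__":
    # The Smith v. Jones citation below is invented for this example.
    draft = (
        "See Brown v. Board of Education, 347 U.S. 483 (1954), and "
        "Smith v. Jones, 999 F.3d 1234 (9th Cir. 2021)."
    )
    for citation in flag_unverified(draft, TRUSTED_CITATIONS):
        print(f"Needs human verification: {citation}")
```

A flagged citation is a prompt for a lawyer to check the source, not proof of fabrication, and a citation that passes still needs a human to confirm that the case actually supports the proposition it is cited for.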

Conclusion

AI is transforming the legal profession, but speed should never come at the expense of accuracy. The recent wave of AI-related legal missteps reveals a simple truth: technology can assist, but never replace, human judgment.

Lawyers who embrace AI responsibly — verifying outputs, following governance protocols, and upholding ethical standards — will not only protect their clients but also shape the next era of credible, AI-enhanced legal practice.

Accuracy and reliability are not optional; they are the new pillars of digital professionalism.

References

The Guardian (2025, Aug 20). WA lawyer referred to regulator after preparing documents with AI-generated case citations that did not exist.

The Guardian (2025, Jun 6). High court tells UK lawyers to urgently stop misuse of AI in legal work.

Reuters (2025, Feb 18). AI hallucinations in court papers spell trouble for lawyers.

The Verge (2025, Feb 14). Judge withdraws Cormedix case after AI citation errors.

Business Insider (2025, May 9). Anthropic’s Claude AI produced inaccurate citation in copyright case.

Chen M. et al. (2024). Hallucination rates in legal AI systems. arXiv.

Zhong R. et al. (2024). Measuring hallucination in large language models. arXiv.

ABA (2024). Formal Opinion 512: Generative Artificial Intelligence Tools.

Stay Ahead with NexterLaw

Be the first to know about legal trends, expert tips, and our latest services. We deliver real value – no spam, just smart insights and useful updates. Subscribe to our newsletter and stay informed, protected, and empowered.

We respect your inbox. Unsubscribe anytime.

Copyright 2026. Nexterlaw. All Rights Reserved.